It’s 6 a.m. again, coffee in hand, livestream camera on, and I’m talking to a community of digital pathology professionals around the world who, like me, think Saturday mornings are a great time to discuss AI, cancer grading, and T-cell imaging.

Welcome to another episode of DigiPath Digest — where we explore what’s new in digital pathology and how artificial intelligence is quietly (and not-so-quietly) transforming our work.

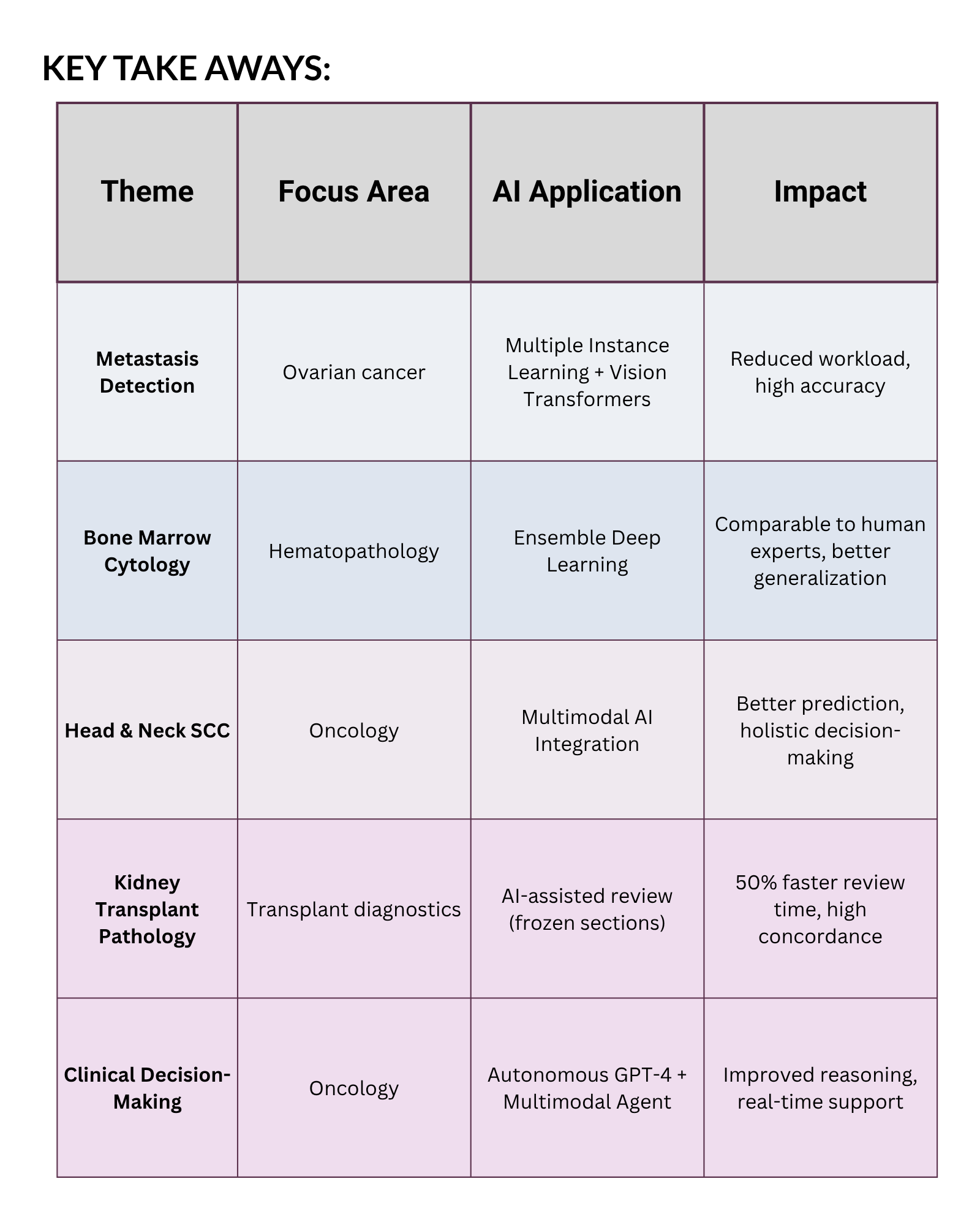

This week’s session covered five studies that show just how far we’ve come, from detecting tiny metastases in ovarian cancer to AI-assisted kidney transplant pathology, and even autonomous GPT-4–based clinical agents that can support oncology decision-making.

And yes — if you were wondering — I did wear my signature histology-themed earrings again. This time it was the multinucleated giant cells, because nothing says “Saturday morning pathology chat” like a bit of cytology-themed jewelry.

AI for Ovarian Cancer Metastasis Detection — Multiple Instance Learning in Action

We started the session with a study that’s a dream use case for AI: detecting ovarian carcinoma metastases in lymph nodes and peritoneum.

For context, these samples often include large areas of non-tumor tissue. Manually screening them is time-consuming and mentally exhausting — the kind of task where AI can shine.

Researchers at Leeds Teaching Hospitals used an attention-based multiple instance learning (MIL) model combined with a vision transformer foundation model to classify whole-slide images.
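If attention-based MIL is new to you, here is the basic idea. The model treats a whole-slide image as a "bag" of patch embeddings, scores each patch, and pools the bag into one slide-level vector weighted by those scores. The sketch below shows that pooling step in plain Python. It assumes the patch embeddings have already been extracted (in the study, a vision transformer foundation model fills that role). The weights `V`, `w`, `c`, and `b` are made-up toy values and the function names are hypothetical. It is the generic, non-gated formulation from the MIL literature, not the study's code.

```python
import math
from typing import List, Tuple

def attention_mil_pool(patches: List[List[float]], V: List[List[float]],
                       w: List[float]) -> Tuple[List[float], List[float]]:
    """Attention-based MIL pooling (non-gated variant).

    patches: K patch embeddings of length D (assumed precomputed by a
             feature extractor such as a foundation model).
    V: L x D projection matrix; w: length-L attention vector (toy weights).
    Returns (slide_embedding, attention_weights).
    """
    scores = []
    for h in patches:
        # hidden = tanh(V @ h), then score = w . hidden
        hidden = [math.tanh(sum(v * x for v, x in zip(row, h))) for row in V]
        scores.append(sum(wi * hid for wi, hid in zip(w, hidden)))
    # Softmax over patches; subtracting the max keeps exp() numerically stable.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    attn = [e / total for e in exps]
    # Slide embedding = attention-weighted sum of the patch embeddings.
    dim = len(patches[0])
    slide = [sum(a * h[d] for a, h in zip(attn, patches)) for d in range(dim)]
    return slide, attn

def slide_probability(slide: List[float], c: List[float], b: float) -> float:
    """Logistic slide-level classifier on the pooled embedding."""
    logit = sum(ci * zi for ci, zi in zip(c, slide)) + b
    return 1.0 / (1.0 + math.exp(-logit))

# Toy bag of three 2-D "patch embeddings"; the second one stands out.
patches = [[0.0, 0.0], [3.0, 0.0], [0.0, 1.0]]
V = [[1.0, 0.0], [0.0, 1.0]]
w = [2.0, 0.0]
slide, attn = attention_mil_pool(patches, V, w)
prob = slide_probability(slide, c=[1.0, 0.0], b=-1.0)
print([round(a, 3) for a in attn], round(prob, 3))
```

The attention weights also explain why this design suits pre-screening. The patches with the highest weights drive the slide-level call, so they can be shown to the pathologist as the regions to check first.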

The results were almost too good to believe:

- AUC: 0.998 (near-perfect discrimination)

- Balanced accuracy: 100% on the ovarian carcinoma dataset

- Lymph node AUC: 0.963, balanced accuracy: 98%
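AUC and balanced accuracy answer different questions. AUC is threshold-free: it is the probability that a randomly chosen positive slide scores higher than a randomly chosen negative one. Balanced accuracy is the mean of sensitivity and specificity at one chosen cutoff, which keeps a mostly-negative dataset from inflating the score. Here is a minimal sketch with made-up labels and scores. The function names are illustrative, and scikit-learn offers equivalent library functions.

```python
from typing import List

def balanced_accuracy(y_true: List[int], y_pred: List[int]) -> float:
    """Mean of sensitivity (recall on positives) and specificity (recall on negatives)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return (sensitivity + specificity) / 2

def roc_auc(y_true: List[int], scores: List[float]) -> float:
    """AUC as the fraction of (positive, negative) pairs ranked correctly; ties count 0.5."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

# Made-up example: 3 positive slides, 5 negative slides.
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
scores = [0.90, 0.80, 0.30, 0.40, 0.20, 0.10, 0.05, 0.35]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # cutoff chosen for illustration

auc = roc_auc(y_true, scores)             # 13 of 15 pairs ranked correctly
bacc = balanced_accuracy(y_true, y_pred)
print(round(auc, 3), round(bacc, 3))      # 0.867 0.833
```

Here the 0.5 cutoff misses one positive, which lowers balanced accuracy. Moving the cutoff would change balanced accuracy but leave AUC untouched.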

This is exactly the kind of “AI pre-screening” that makes sense — the model flags likely positive slides, saving pathologists from reviewing hundreds of negatives.
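In workflow terms, pre-screening can be as simple as ranking slides by the model's predicted probability and moving those above a cutoff to the top of the worklist. The sketch below uses hypothetical slide IDs and a 0.5 threshold chosen only for illustration. In practice the cutoff would presumably be tuned toward high sensitivity so that true positives are not pushed down the queue.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class SlideScore:
    slide_id: str          # hypothetical identifier
    prob_positive: float   # model's predicted probability of metastasis

def triage(slides: List[SlideScore],
           threshold: float = 0.5) -> Tuple[List[SlideScore], List[SlideScore]]:
    """Rank slides by predicted probability (highest first) and split them at
    the threshold into a flagged list and a remainder."""
    ranked = sorted(slides, key=lambda s: s.prob_positive, reverse=True)
    flagged = [s for s in ranked if s.prob_positive >= threshold]
    remainder = [s for s in ranked if s.prob_positive < threshold]
    return flagged, remainder

slides = [SlideScore("LN-01", 0.62), SlideScore("LN-02", 0.04),
          SlideScore("LN-03", 0.97), SlideScore("LN-04", 0.11)]
flagged, remainder = triage(slides)
print([s.slide_id for s in flagged])    # ['LN-03', 'LN-01']
print([s.slide_id for s in remainder])  # ['LN-04', 'LN-02']
```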

But here’s the important part:

AI doesn’t diagnose. It prioritizes. We still confirm. That’s where the human layer matters.

I love seeing this kind of collaboration — AI doing the first pass, and pathologists doing the final call. It’s how we move toward smarter, more sustainable workflows without losing our expertise in the process.

AI for Bone Marrow Morphometry — Ensemble Models That Think Like a Team

Next up, we talked about one of the hardest areas of diagnostic pathology: bone marrow aspirate analysis.

Anyone who’s spent time identifying every cell type under oil immersion knows the complexity — there’s a fine line between promyelocytes, myelocytes, and blasts, and it’s easy to cross it.

A team from UCSF developed a snapshot ensemble deep learning model trained on over 30,000 images from morphologically normal marrows. They then tested it on external data from Memorial Sloan Kettering (MSK), including both normal and diseased cases.

The results were compelling:

- The model outperformed prior approaches in accuracy.

- It generalized well across datasets.

- Its performance was comparable to or even better than human experts in certain cell classes.

Why does that matter? Because an ensemble doesn’t rely on a single perspective. A snapshot ensemble combines the predictions of several model checkpoints (“snapshots”) saved during one training run, much like a group of pathologists discussing a tough case.
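Here is a minimal sketch of the two ingredients of a snapshot ensemble. It follows the generic recipe from the snapshot-ensembles literature (Huang et al., 2017), not the UCSF team's exact training setup. The first ingredient is a cyclic cosine learning-rate schedule that restarts high at the start of each cycle, with a snapshot of the weights saved at the end of each cycle. The second is averaging the snapshots' class probabilities at inference. The cell-class names and logits are hypothetical toy values.

```python
import math
from typing import List, Tuple

def snapshot_lr(iteration: int, total_iters: int, n_cycles: int, lr0: float) -> float:
    """Cyclic cosine annealing: the learning rate restarts at lr0 at the start of
    each cycle and decays toward 0 by its end, where a weight snapshot would be
    saved. `iteration` is 0-based."""
    cycle_len = math.ceil(total_iters / n_cycles)
    pos = iteration % cycle_len
    return lr0 / 2 * (math.cos(math.pi * pos / cycle_len) + 1)

def softmax(logits: List[float]) -> List[float]:
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def ensemble_predict(snapshot_logits: List[List[float]]) -> Tuple[List[float], int]:
    """Average class probabilities across snapshots; return (mean_probs, best_class)."""
    probs = [softmax(z) for z in snapshot_logits]
    n_classes = len(probs[0])
    mean = [sum(p[c] for p in probs) / len(probs) for c in range(n_classes)]
    return mean, max(range(n_classes), key=lambda c: mean[c])

classes = ["blast", "promyelocyte", "myelocyte"]   # hypothetical label set
logits = [[2.0, 1.0, 0.0],    # snapshot 1
          [0.5, 1.5, 0.0],    # snapshot 2 (alone, it would say promyelocyte)
          [1.8, 1.2, 0.1]]    # snapshot 3
mean_probs, best = ensemble_predict(logits)
print(classes[best], [round(p, 3) for p in mean_probs])
```

In this toy example the second snapshot on its own would call the cell a promyelocyte. The averaged probabilities side with the other two snapshots instead, which is the "group consensus" effect in miniature.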

I’ve seen plenty of “AI fails” on external data, so this generalizability is huge.

It tells us that AI in hematopathology isn’t just a lab curiosity anymore — it’s a tool we can actually start to trust.

AI in Head and Neck Squamous Cell Carcinoma (HNSCC) — The Power of Multimodal Integration

Then we moved to head and neck squamous cell carcinoma (HNSCC), a notoriously complex disease.

A review article outlined how AI is now being used to predict extranodal extension, tumor burden, and even radiotherapy toxicity.

But the most exciting part? Multimodal AI models that combine:

- Histopathology images 🧫

- Radiologic data 🩻

- Genomic information 🧬

- Clinical metadata 📋

These models outperform single-source (unimodal) systems because they don’t look at just one piece of the puzzle — they integrate everything.
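One common way to "integrate everything" is intermediate (feature-level) fusion. Each modality is encoded separately, and the resulting vectors are concatenated before a shared prediction head. The sketch below assumes the per-modality embeddings already exist. The dimensions, values, and logistic head are hypothetical placeholders. Models covered in the review may instead use other strategies, such as late fusion of per-modality predictions or cross-attention.

```python
import math
from typing import Dict, List, Optional

MODALITIES = ["histology", "radiology", "genomics", "clinical"]

def fuse(embeddings: Dict[str, Optional[List[float]]], dims: Dict[str, int]) -> List[float]:
    """Concatenate per-modality embeddings in a fixed order. A missing modality is
    zero-filled, and one presence flag per modality is appended so a downstream
    model can tell 'absent' apart from 'all zeros'."""
    fused: List[float] = []
    present: List[float] = []
    for name in MODALITIES:
        vec = embeddings.get(name)
        if vec is None:
            fused.extend([0.0] * dims[name])
            present.append(0.0)
        else:
            if len(vec) != dims[name]:
                raise ValueError(f"{name}: expected {dims[name]} values, got {len(vec)}")
            fused.extend(vec)
            present.append(1.0)
    return fused + present

def predict(x: List[float], weights: List[float], bias: float) -> float:
    """Placeholder prediction head: logistic regression on the fused vector."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))

dims = {"histology": 3, "radiology": 2, "genomics": 2, "clinical": 1}
patient = {"histology": [0.2, 0.7, 0.1], "radiology": [0.5, 0.3], "clinical": [0.9]}  # no genomics
x = fuse(patient, dims)
risk = predict(x, weights=[0.1] * len(x), bias=-0.5)
print(len(x), x[-4:], round(risk, 3))
```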

Imagine an AI that not only recognizes tumor architecture but also knows the patient’s molecular profile, radiologic pattern, and treatment history — and predicts which therapy will work best.

We’re not there yet clinically, but the foundations are being laid.

Of course, we also discussed the barriers — lack of explainability, slow adoption, and workflow integration challenges. These aren’t minor issues, but they’re not dealbreakers either.

We’re seeing steady movement toward real-world application, and that’s progress.

AI-Assisted Kidney Pathology — When Time Is Everything

Next, we discussed one of my favorite papers from this episode — and a great example of AI in action for high-stakes, time-sensitive pathology.

During organ transplantation, time is critical: frozen-section biopsies of donor kidneys need to be reviewed fast to assess viability.

In this study, researchers evaluated an AI-assisted review system (AAR) for donor kidney pathology using H&E-stained frozen sections.

The results were impressive:

- Concordance with ground truth: 98.33%

- AI reduced review time by 54.83%, more than halving a roughly 17-minute review

- No systematic bias detected in AI-assisted reads
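The time savings are a relative reduction, (before - after) / before. Here is a quick arithmetic check using only the numbers quoted in this section. A 54.83% cut from a 17-minute baseline leaves about 7.7 minutes, whereas going from 17 to 8.5 minutes is exactly a 50% reduction. The helper names are illustrative.

```python
def percent_reduction(before: float, after: float) -> float:
    """Relative reduction in percent: (before - after) / before * 100."""
    if before <= 0:
        raise ValueError("baseline must be positive")
    return (before - after) / before * 100.0

def time_after_reduction(before: float, pct: float) -> float:
    """Time remaining after a pct% reduction from the baseline."""
    return before * (1.0 - pct / 100.0)

print(round(time_after_reduction(17.0, 54.83), 2))  # 7.68
print(percent_reduction(17.0, 8.5))                 # 50.0
```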

And all this without losing diagnostic accuracy.

The most striking part? The model worked on frozen sections, which are notoriously challenging due to artifacts and lower quality.

If we can integrate this kind of AI seamlessly into digital workflows, we could make organ allocation faster and more objective — potentially saving more lives.

That’s where I see AI as truly transformative: not just improving accuracy, but changing the pace of decision-making.

The Future Is Here — Autonomous AI Agents for Clinical Decision-Making

The last paper felt like science fiction made real.

Researchers introduced a GPT-4–based multimodal AI agent that integrates:

- Vision transformers for pathology slides,

- Language models for reasoning and literature retrieval,

- Radiologic segmentation via MedSAM,

- And RAG (retrieval-augmented generation) for accessing external databases like PubMed or OncoKB.
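At its core, retrieval-augmented generation does two things: it finds the passages most relevant to a question, then pastes them into the language model's prompt so the answer is grounded in them. The toy sketch below uses bag-of-words cosine similarity over a tiny in-memory corpus. The documents are placeholders, the function names are hypothetical, and nothing here calls PubMed, OncoKB, or any real API. Production systems typically use dense embeddings and a vector index instead.

```python
import math
import re
from collections import Counter
from typing import Dict, List

def tokenize(text: str) -> List[str]:
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return dot / (na * nb) if na and nb else 0.0

def retrieve(query: str, corpus: Dict[str, str], k: int = 2) -> List[str]:
    """Return up to k document IDs with nonzero similarity, best first."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(doc))), doc_id) for doc_id, doc in corpus.items()]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for score, doc_id in scored[:k] if score > 0]

def build_prompt(question: str, corpus: Dict[str, str], k: int = 2) -> str:
    """Prepend the retrieved passages to the question before it goes to the LLM."""
    context = "\n".join(f"[{i}] {corpus[i]}" for i in retrieve(question, corpus, k))
    return f"Context:\n{context}\n\nQuestion: {question}\nAnswer using only the context above."

corpus = {  # placeholder snippets, not real database content
    "note-1": "placeholder summary of KRAS variant annotation",
    "note-2": "placeholder summary of MSI status and immunotherapy response",
    "note-3": "placeholder summary of lymph node staging rules",
}
question = "How is this KRAS variant annotated?"
print(build_prompt(question, corpus))
```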

They tested it on 20 real multimodal oncology cases, and the agent significantly outperformed GPT-4 alone in accuracy and clinical reasoning.

It could even independently select the right tools for each task — a glimpse into how autonomous agents might soon support pathologists and oncologists in personalized treatment planning.
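Mechanically, "selecting the right tool" works like this: a planner looks at each subtask, picks one of the available tools, runs it, and keeps the output for the final reasoning step. The sketch below is a deliberately simplified stand-in. A keyword rule plays the role of the GPT-4 planner, and the three tool functions are hypothetical stubs rather than real MedSAM, vision transformer, or database calls.

```python
import re
from typing import Callable, Dict, List, Tuple

# Hypothetical tool stubs. In a real agent these would wrap a slide classifier,
# an image-segmentation model, and a literature/knowledge-base search.
def classify_slide(task: str) -> str:
    return f"[slide classifier output for: {task}]"

def segment_scan(task: str) -> str:
    return f"[segmentation output for: {task}]"

def search_literature(task: str) -> str:
    return f"[retrieved evidence for: {task}]"

TOOLS: Dict[str, Callable[[str], str]] = {
    "classify_slide": classify_slide,
    "segment_scan": segment_scan,
    "search_literature": search_literature,
}

def choose_tool(task: str) -> str:
    """Keyword rule standing in for the LLM planner, which in a real agent
    would read the task and emit the name of the tool to call."""
    words = set(re.findall(r"[a-z&]+", task.lower()))
    if words & {"slide", "h&e", "histology"}:
        return "classify_slide"
    if words & {"ct", "mri", "scan"}:
        return "segment_scan"
    return "search_literature"

def run_agent(tasks: List[str]) -> List[Tuple[str, str, str]]:
    """Route each task to a tool, run it, and keep a (task, tool, output) trace."""
    trace = []
    for task in tasks:
        tool_name = choose_tool(task)
        trace.append((task, tool_name, TOOLS[tool_name](task)))
    return trace

tasks = ["Classify the H&E slide",
         "Measure the lesion on the CT scan",
         "Find guideline evidence for this variant"]
for task, tool_name, output in run_agent(tasks):
    print(f"{tool_name:18} <- {task}")
```

The routing rule is the part the real agent delegates to the language model. The surrounding loop (choose, call, record) stays essentially the same whichever planner is used.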

We’re not ready to hand over the sign-out pen just yet 🖋️, but the groundwork for AI-assisted, context-aware decision-making is being built.

Community, Connection, and the Human Touch

No DigiPath Digest would be complete without community updates.

I shared that I’ll be presenting at the Society of Toxicologic Pathology (STP) annual meeting, speaking about digital pathology and AI in neuropathology — an area where I see incredible potential for translational research.

And of course, my histology-inspired earrings made another appearance. They’ve become more than accessories; they’re conversation starters that help people talk about pathology in everyday settings.

I also reminded everyone that my free Digital Pathology 101 eBook is available on my website (and on Amazon if you prefer print). It’s constantly updated with new AI developments — like these multimodal models we discussed today.

The fact that so many of you tune in early, comment, share, and ask smart, practical questions — that’s what makes this community special.

Final Thoughts

This livestream reminded me why I started doing this in the first place — to make digital pathology accessible, practical, and exciting without overcomplicating it.

AI isn’t replacing us. It’s helping us see more, faster, and more consistently.

It’s the microscope’s next evolution — a thinking companion that learns from data while we provide context and care.

If you missed the livestream, you can find the replay and join our next session at DigitalPathologyPlace.com.

Let’s keep learning, connecting, and building the future of pathology — one slide (and one coffee ☕️) at a time.
